Clinical diagnosis remains the cornerstone of medical practice, and paradoxically, its most fallible step. Even in the era of continuing technological advances and newer imaging techniques, diagnostic errors continue to account for a substantial share of preventable harm in the healthcare sector. The reasons are well known: fatigue, cognitive bias, incomplete information, and the overwhelming influx of digital data that no clinician can absorb in real time. Artificial intelligence (AI), once seen as a distant adjunct, now stands at the threshold of transforming this problem by parsing information at a scale, speed, and depth far beyond human reach.

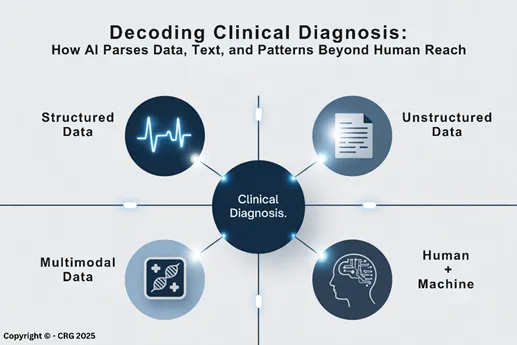

Modern clinicians face an impossible arithmetic. A single hospitalised patient may generate more than a million data points per day, spanning vital signs, laboratory values, imaging data, medication changes, and continuous monitoring feeds. To this, one must add the narrative richness and inconsistency of free-text clinical notes, discharge summaries, and consultation reports. No human brain, regardless of education, training, diligence, or experience, can process such a magnitude of data comprehensively or continuously. Diagnostic reasoning, by necessity, becomes a selective act of attention, and what is overlooked may well determine the clinical outcome. In this context, AI intervenes not as an oracle, but as a form of cognitive augmentation. Its promise lies in re-engineering how information is perceived, interpreted, and prioritised, transforming noise into insight and uncertainty into pattern recognition (FIGURE).
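
To make that arithmetic concrete, the back-of-the-envelope sketch below counts the samples produced by a few continuous monitoring feeds over 24 hours. The feed names and sampling rates are illustrative assumptions, not figures taken from any specific monitor or study.

```python
# Back-of-the-envelope count of data points per patient per day.
# All feed names and sampling rates are hypothetical, for illustration only.
SECONDS_PER_DAY = 24 * 60 * 60

# Assumed samples per second for each feed.
assumed_feeds_hz = {
    "ecg_waveform": 250,         # assumed waveform sampling rate
    "pulse_oximetry_wave": 60,   # assumed plethysmography sampling rate
    "numeric_vitals": 1,         # assumed once-per-second numeric summary
}


def daily_points(feeds_hz):
    """Return the number of samples each feed produces in 24 hours."""
    return {name: hz * SECONDS_PER_DAY for name, hz in feeds_hz.items()}


per_feed = daily_points(assumed_feeds_hz)
total = sum(per_feed.values())

for name, count in per_feed.items():
    print(f"{name}: {count:,} samples/day")
print(f"total: {total:,} samples/day")
```

Under these assumptions a single waveform already exceeds a million samples per day, before any laboratory values, imaging, or free-text notes are counted.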

Machine learning (ML), the backbone of modern AI, thrives where data are structured: numbers, categories, and trends. In this realm, algorithms can detect subtle patterns in physiologic data that herald disease long before it becomes clinically visible.

Take sepsis, one of the most lethal and diagnostically elusive syndromes in critical care. Traditional detection relies on vital-sign thresholds and laboratory abnormalities that often appear late. By contrast, machine-learning systems such as the Targeted Real-Time Early Warning System (TREWS) at Johns Hopkins and the Epic Deterioration Index continuously analyse thousands of variables, recognising combinations of changes that precede overt deterioration by up to 48 hours. In multicenter evaluations, these tools have been associated with reduced sepsis-related mortality and shorter hospital stays, serving not as replacements for clinical judgment but as early-warning companions.
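
The contrast between single-variable thresholds and combined patterns can be sketched in a few lines. The example below is a toy illustration only: the thresholds, baselines, weights, and intercept are invented for this sketch and do not represent TREWS, the Epic Deterioration Index, or any clinical guidance.

```python
import math

# Hypothetical single-variable alarm thresholds (illustration only).
THRESHOLDS = {"heart_rate": 110, "resp_rate": 24, "temp_c": 38.5, "lactate": 2.5}

# Hypothetical baselines and weights for a toy logistic risk score.
BASELINE = {"heart_rate": 80, "resp_rate": 16, "temp_c": 37.0, "lactate": 1.0}
WEIGHTS = {"heart_rate": 0.05, "resp_rate": 0.15, "temp_c": 0.8, "lactate": 1.0}
INTERCEPT = -3.0


def threshold_alarm(obs):
    """Fire only when at least one variable crosses its own threshold."""
    return any(obs[name] >= limit for name, limit in THRESHOLDS.items())


def combined_risk(obs):
    """Logistic score over the weighted deviations of all variables together."""
    z = INTERCEPT + sum(
        WEIGHTS[name] * (obs[name] - BASELINE[name]) for name in WEIGHTS
    )
    return 1.0 / (1.0 + math.exp(-z))


# Every value is below its individual threshold, yet all are drifting upward.
patient = {"heart_rate": 104, "resp_rate": 22, "temp_c": 38.2, "lactate": 2.2}

print("threshold alarm:", threshold_alarm(patient))
print("combined risk:", round(combined_risk(patient), 2))
```

In this sketch no individual threshold is crossed, so the rule-based alarm stays silent, while the combined score is elevated because several modest deviations add up.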

A similar transformation is underway in cardiology. The Mayo Clinic’s AI-ECG algorithm can detect previously unrecognised left ventricular dysfunction, an early sign of heart failure, simply from the electrical patterns of a routine ECG. Studies involving tens of thousands of patients have demonstrated diagnostic accuracies comparable to those of echocardiography, enabling opportunistic screening in primary care, emergency departments, and even wearable devices. Such examples illustrate AI’s ability to extract hidden meaning from the mundane, turning ubiquitous, inexpensive tests into predictive instruments.

If structured data reveal patterns, unstructured data hold stories. For decades, the narrative content of medical practice (the patient’s history, the clinician’s impressions, the nuances of radiology or pathology reports) has remained largely invisible to machines. This is changing rapidly through Natural Language Processing (NLP), which allows AI systems to read, interpret, and synthesise text with near-human fluency.

In practice, NLP can now extract diagnostic signals buried within millions of clinical notes. Algorithms trained on electronic health records have identified undiagnosed conditions ranging from familial dyslipidemia to early dementia, purely from patterns of word associations and context. At Stanford University, NLP tools have been used to detect patients at high risk for suicide or opioid misuse, capturing linguistic cues of despair or dependency long before they appear in structured data fields.
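
As a rough illustration of how linguistic cues can be pulled from free text, the sketch below scans invented notes for a handful of phrases and skips mentions that are negated earlier in the same sentence. The phrase lists, labels, and notes are all hypothetical; production systems rely on trained language models rather than hand-written patterns, and this is not the method used at any named institution.

```python
import re

# Hypothetical phrase patterns, chosen only for illustration.
SIGNAL_PATTERNS = {
    "hopelessness": re.compile(
        r"\b(hopeless|no reason to live|feels? like a burden)\b", re.I
    ),
    "opioid_misuse": re.compile(
        r"\b(early refill|lost (his|her|their) prescription)\b", re.I
    ),
}
# Crude negation cues; anything before the match in the same sentence counts.
NEGATION = re.compile(r"\b(denies|no evidence of|not)\b", re.I)


def extract_signals(note):
    """Return the set of signal labels mentioned without a preceding negation."""
    found = set()
    for sentence in re.split(r"(?<=[.!?])\s+", note):
        for label, pattern in SIGNAL_PATTERNS.items():
            match = pattern.search(sentence)
            if match and not NEGATION.search(sentence[: match.start()]):
                found.add(label)
    return found


# Invented example notes.
note_a = (
    "Patient says she feels hopeless since losing her job. "
    "Requests early refill of pain medication."
)
note_b = "Patient denies feeling hopeless. Sleeping well."

print(sorted(extract_signals(note_a)))
print(sorted(extract_signals(note_b)))
```

The negation check is deliberately simple; it shows why context matters, since the same word can signal risk in one note and reassurance in another.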

Imaging, long the visual frontier of medicine, has become a linguistic one as well. AI systems capable of parsing imaging reports can automatically flag discrepancies between diagnostic conclusions and clinical impressions, alerting physicians to follow-up actions that might otherwise be overlooked. In large health networks, this kind of automated “cross-checking” has begun to close the loop between interpretation and intervention. The topic is important enough that we will address it more fully in a future article dedicated to the impact of AI on neuroimaging.

The next generation of diagnostic AI integrates multiple data streams (clinical notes, laboratory results, imaging, and physiologic signals) into unified predictive models. These multimodal systems mirror the way physicians reason: synthesising disparate evidence into a coherent narrative of disease.
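
One simple way to combine evidence from several data streams is late fusion, in which each modality produces its own probability and the results are merged afterwards. The sketch below averages per-modality log-odds; the modality names and probabilities are invented, and real multimodal systems typically learn the fusion jointly rather than using a fixed average.

```python
import math


def logit(p):
    """Log-odds of a probability; p must lie strictly between 0 and 1."""
    return math.log(p / (1.0 - p))


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fuse(modality_probs, weights=None):
    """Weighted average of per-modality log-odds, mapped back to a probability."""
    if weights is None:
        weights = {name: 1.0 for name in modality_probs}
    total_weight = sum(weights[name] for name in modality_probs)
    z = sum(
        weights[name] * logit(p) for name, p in modality_probs.items()
    ) / total_weight
    return sigmoid(z)


# Hypothetical per-modality disease probabilities for one patient.
example = {
    "clinical_notes": 0.60,
    "laboratory": 0.70,
    "imaging": 0.80,
    "physiologic": 0.55,
}
fused = fuse(example)
print(f"fused probability: {fused:.2f}")
```

Passing a `weights` dictionary lets one modality count for more than another, for example when imaging is known to be more reliable for a given condition.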

Retinal-analysis algorithms from Google-affiliated research groups, including DeepMind, exemplify this convergence. By examining retinal images, such systems not only identify diabetic retinopathy and macular degeneration but can also infer cardiovascular risk factors, including blood pressure and smoking status, insights traditionally requiring invasive or laboratory data. Likewise, algorithms analysing chest X-rays can now estimate a patient’s chronological age, bone density, or even future risk of lung cancer years before radiographic lesions appear.

At the other end of the diagnostic spectrum, AI models have demonstrated proficiency in integrating genomic data with clinical phenotypes to uncover rare diseases. By linking subtle facial features from photographs with genomic variants, these systems have accelerated diagnosis in genetic disorders that previously required years of specialist evaluation.

Even today’s most sophisticated AI systems are constrained by the architecture of classical computing. Processing millions of diagnostic variables still takes time, energy, and cost. Quantum computing promises to ease this bottleneck. By leveraging qubits that can exist in superpositions of states, quantum algorithms may, for certain classes of problems, explore very large spaces of diagnostic permutations far more efficiently than classical methods.

While still experimental, early work in quantum machine learning has shown potential to revolutionise pattern recognition in imaging, proteomics, and complex physiologic modeling. Hospitals already experimenting with quantum-enhanced AI foresee a future where diagnostic computation happens in real time, integrated seamlessly with bedside monitoring, imaging consoles, and laboratory networks.

Despite its sophistication, AI is not a substitute for the diagnostician. It lacks empathy, clinical context, and the moral framework required for patient-centred decision-making. What AI provides is cognitive amplification, a diagnostic safety net that captures what human perception may miss and prioritises what human cognition must consider.

In real-world settings, AI’s greatest strength lies in triage and augmentation. Radiologists now use AI to pre-read mammograms or chest CTs, flagging suspicious lesions for immediate review. Pathologists rely on AI-enhanced slide analysis to quantify tumor infiltration or detect mitotic figures invisible to the naked eye. Emergency physicians benefit from predictive dashboards that rank patients by risk, enabling more efficient allocation of attention in crowded departments. These systems do not dictate decisions; they shape priorities, focusing human expertise where it matters most.
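
The ranking behind such a dashboard can be sketched very simply. In the example below, the `Patient` fields, the risk values, and the tie-breaking rule are all hypothetical; the point is only that the output is an ordering for human review, not a decision.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    patient_id: str
    risk: float            # hypothetical model output between 0 and 1
    minutes_waiting: int


def triage_order(patients):
    """Sort by highest risk first; break ties by longest wait."""
    return sorted(patients, key=lambda p: (-p.risk, -p.minutes_waiting))


# Invented waiting-room snapshot.
waiting_room = [
    Patient("A", 0.12, 95),
    Patient("B", 0.81, 10),
    Patient("C", 0.81, 40),
    Patient("D", 0.35, 5),
]

ordered_ids = [p.patient_id for p in triage_order(waiting_room)]
print(ordered_ids)
```

Patients B and C share the same risk score, so the longer wait moves C ahead of B; the clinician still decides what happens next.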

Importantly, studies have shown that clinician-AI collaboration consistently outperforms either alone. In dermatology, hybrid models combining AI screening with physician review achieve diagnostic accuracies exceeding 95%, a figure neither can sustain independently. The message is clear: augmentation, not automation, defines the future of diagnosis.

With power comes complexity. The integration of AI into diagnostic workflows raises pressing ethical and operational questions. How transparent should algorithmic reasoning be? Who is accountable when an AI-informed decision goes wrong: the clinician, the institution, or the developer? And how do we prevent bias embedded in training data from amplifying health disparities?

Hospitals adopting AI-based diagnostic systems are learning that technology alone does not guarantee accuracy or fairness. Bias can enter through unbalanced datasets that overrepresent one demographic, disease stage, or geography, yielding inequitable results. Addressing this requires continuous model retraining, validation across diverse populations, and the establishment of AI governance frameworks that combine medical, ethical, and informatics oversight.
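
Validation across populations can begin with something as basic as comparing a model's sensitivity between subgroups. The sketch below does this on synthetic records; the group names and counts are invented, and a real audit would examine more metrics and far larger samples.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Compute sensitivity (true-positive rate) per group.

    records: iterable of (group, true_label, predicted_label) with 0/1 labels.
    Groups with no positive cases are omitted from the result.
    """
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for group, truth, pred in records:
        if truth == 1:
            positives[group] += 1
            if pred == 1:
                true_pos[group] += 1
    return {g: true_pos[g] / positives[g] for g in positives}


# Synthetic records, invented for illustration: group_b is underrepresented.
records = (
    [("group_a", 1, 1)] * 9 + [("group_a", 1, 0)] * 1 + [("group_a", 0, 0)] * 10
    + [("group_b", 1, 1)] * 3 + [("group_b", 1, 0)] * 2 + [("group_b", 0, 0)] * 5
)

per_group = sensitivity_by_group(records)
gap = max(per_group.values()) - min(per_group.values())

for group, value in sorted(per_group.items()):
    print(f"{group}: sensitivity {value:.2f}")
print(f"gap: {gap:.2f}")
```

A large gap between groups is a signal to investigate the training data and revalidate, not a verdict in itself.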

Equally critical is clinician trust. AI adoption falters when users view systems as opaque or intrusive. Transparency, offering interpretable outputs and clear explanations for diagnostic suggestions, builds the confidence needed for integration into clinical reasoning. Institutions leading in AI implementation have recognised that training, feedback, and shared accountability are as vital as the algorithms themselves.

The path from algorithm to bedside is rarely straightforward. Successful integration demands alignment between data infrastructure, clinical workflow, and leadership culture. Early pilots often reveal that the greatest barriers are not technical but organisational: fragmented data silos, inconsistent documentation, or resistance to change.

Forward-thinking hospital systems now establish AI stewardship teams, multidisciplinary groups blending clinicians, data scientists, and IT specialists, to ensure that diagnostic algorithms are validated, updated, and contextually relevant. Some organisations have begun embedding AI directly into their electronic medical record (EMR) systems, transforming passive databases into dynamic diagnostic partners. Others deploy AI command centers that aggregate hospital-wide data to predict patient flow, identify deteriorating patients, and coordinate resource utilisation.

The operational payoff is tangible: fewer missed diagnoses, faster recognition of deterioration, and improved clinician efficiency. In an era where diagnostic accuracy directly intersects with reimbursement, liability, and patient satisfaction, AI’s contribution extends well beyond the bedside; it reshapes institutional performance metrics.

The history of medicine has always been the story of tools extending human capability: the stethoscope, the microscope, the CT scanner. AI continues this lineage, but on a scale previously unimaginable. It listens to every data source, reads every report, sees every pixel, and remembers every outcome. Properly designed, it transforms the chaos of modern information into the clarity physicians need to act with confidence.

In this light, AI is not an end in itself but a means to restore diagnostic focus in a data-saturated age. The clinician’s art, the synthesis of evidence, intuition, and empathy, remains irreplaceable, but its reach is now amplified by a partner that never sleeps, never forgets, and learns from every patient encountered. Together, humans and machines forge a new paradigm: one in which diagnostic excellence is not limited by biology but expanded by intelligence (FIGURE).

NOTE: This is the first in a series of four articles that comprehensively cover the different dimensions and the forecasted outcome of AI in medical practice.