Artificial intelligence techniques are developing rapidly alongside the increase in big data availability. These methods will have an important place in the future of critical care and emergency medicine, to assist in diagnosis, patient management, and prediction of complications and help as we move toward ever more personalised patient care.

There is no doubt that artificial intelligence (AI) will have an important place in the future of critical care and emergency medicine. The rapid development, availability, and use of less invasive monitoring systems over recent years, alongside increases in computing power and data storage, are facilitating the collection and analysis of vast amounts of patient information. Simultaneously, AI technology is advancing, such that this “big data” can be used to recognise patterns and associations among variables and outcomes of interest.

AI will have three major applications in the critical care field: assisting in diagnosis, in treatment, and in the prediction of complications.

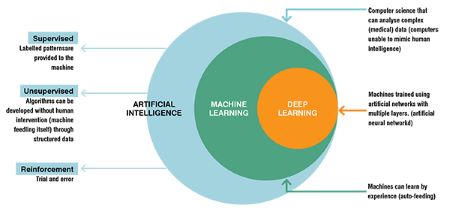

In the medical context, most AI approaches employ machine learning techniques in which algorithms are used to classify or group data or to make predictions about certain outcomes, including disease diagnosis and prognosis. Machine learning can be subdivided into three broad methods: supervised machine learning, unsupervised machine learning, and reinforcement learning (Figure).

Supervised machine learning is the most widely used method at the present time. Data sets that are already labelled to be associated with a specific event or outcome are used to “train” computer systems to classify data correctly. Some straightforward examples that have been employed include training computer algorithms to interpret certain types of X-ray, to differentiate cutaneous cancers, and to interpret fundal changes in the eye. Supervised machine learning also enables the computerised system to determine the best therapeutic plan, and predictive modelling can be used to foresee the chances of response to treatment.
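As a rough illustration of training on labelled data, the sketch below fits a nearest-centroid classifier, one of the simplest supervised methods, and is not a method described in this article. The two features (heart rate and respiratory rate), the labels, and all the numbers are made up for the example and carry no clinical meaning.

```python
from statistics import mean


def train_centroids(samples, labels):
    """Compute one mean feature vector (centroid) per label."""
    grouped = {}
    for features, label in zip(samples, labels):
        grouped.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(centroids, features):
    """Return the label whose centroid is closest (squared Euclidean distance)."""
    def sq_dist(centroid):
        return sum((a - b) ** 2 for a, b in zip(features, centroid))
    return min(centroids, key=lambda label: sq_dist(centroids[label]))


# Synthetic, made-up training set: (heart rate /min, respiratory rate /min).
train_x = [(72, 14), (80, 16), (68, 12), (118, 28), (125, 30), (110, 26)]
train_y = ["stable", "stable", "stable",
           "deteriorating", "deteriorating", "deteriorating"]

centroids = train_centroids(train_x, train_y)   # the "training" step
prediction_a = predict(centroids, (75, 15))     # classify unseen cases
prediction_b = predict(centroids, (120, 29))
```

The labelled examples are what make this supervised: the algorithm is told the correct answer for each training case and only generalises from there.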

Unsupervised machine learning enables the computer algorithm to identify previously unrecognised patterns and clusters within patient populations. This may help to discover previously unknown predictive and/or therapeutic factors; for example, this approach has been used to identify subsets of patients with sepsis and with acute respiratory failure. Latent class and latent profile analysis are the most widely used unsupervised machine learning techniques for this purpose. The limitation of this approach is that the identified groups may not necessarily be clinically meaningful so that some clinical interpretation is needed. The ‘black box’ nature of this approach, in which the reason for the groupings achieved is unknown, can also be concerning.
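To make the idea of discovering groups without labels more concrete, here is a minimal sketch using k-means clustering. This is a simpler stand-in chosen for brevity, not the latent class or latent profile analysis mentioned above. The features (lactate and mean arterial pressure), the values, and the starting centroids are invented for illustration, and the features are left unscaled to keep the code short.

```python
def kmeans(points, centroids, iterations=10):
    """Plain k-means: alternate the assignment and centroid-update steps."""
    centroids = list(centroids)
    assignments = []
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        assignments = [
            min(
                range(len(centroids)),
                key=lambda k: sum((a - b) ** 2 for a, b in zip(p, centroids[k])),
            )
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        for k in range(len(centroids)):
            members = [p for p, a in zip(points, assignments) if a == k]
            if members:
                centroids[k] = tuple(sum(col) / len(members) for col in zip(*members))
    return assignments, centroids


# Synthetic, made-up data: (lactate mmol/L, mean arterial pressure mmHg).
points = [(1.0, 85), (1.2, 90), (0.9, 88), (4.5, 60), (5.0, 55), (4.8, 58)]
initial = [points[0], points[3]]   # arbitrary starting centroids

assignments, final_centroids = kmeans(points, initial)
```

No outcome labels are supplied, so the algorithm returns only cluster indices. Deciding whether cluster 0 and cluster 1 correspond to anything clinically meaningful remains a human task, which is the limitation noted above.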

In reinforcement learning, algorithms are trained by trial and error, with “rewards” being given for decisions giving a positive outcome, and a “penalty” for decisions that have a negative outcome. This approach is particularly suited to optimise the timing, dose, and duration of interventions.
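The reward-and-penalty loop can be sketched with an epsilon-greedy multi-armed bandit, which is among the simplest reinforcement learning settings. Everything here is an assumption made for illustration: the three "dose levels", their simulated success probabilities, and the +1/-1 reward scheme. A real clinical application would also have to model patient state and the timing and duration of the intervention.

```python
import random


def run_bandit(success_prob, steps=5000, epsilon=0.1, seed=0):
    """Learn by trial and error which action yields the best average reward."""
    rng = random.Random(seed)
    n_arms = len(success_prob)
    value = [0.0] * n_arms   # running mean reward observed for each action
    count = [0] * n_arms     # number of times each action was tried
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(n_arms)                        # explore
        else:
            arm = max(range(n_arms), key=lambda a: value[a])   # exploit
        # Simulated outcome: reward +1 for success, penalty -1 for failure.
        reward = 1.0 if rng.random() < success_prob[arm] else -1.0
        count[arm] += 1
        value[arm] += (reward - value[arm]) / count[arm]       # incremental mean
    return value, count


# Hypothetical success probabilities for low, medium, and high dose.
value, count = run_bandit([0.1, 0.9, 0.2])
best_arm = max(range(len(value)), key=lambda a: value[a])
```

The algorithm is never told which dose is best. It infers this from accumulated rewards and penalties, while the `epsilon` parameter keeps it occasionally trying the alternatives.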

Neural networks are a more complex type of machine learning model designed to identify complex relationships between input variables and outcomes and can be used in both supervised and unsupervised machine learning. Neural networks effectively reproduce layers of artificial “neurons” or nodes with an input layer (which can include a large number of input variables, including physiological or laboratory markers), one or several (so-called deep learning) interconnecting hidden layers, and an output layer. Limitations again include the ‘black box’ aspects of this approach and the difficulty such systems have determining and taking into account clinical priorities.
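The layered structure described above can be shown with a single forward pass through a tiny network: three inputs, one hidden layer of two nodes, and one output node. The weights below are arbitrary, untrained numbers chosen only so the arithmetic can be followed by hand, and the inputs are assumed to be already standardised.

```python
import math


def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def relu(x):
    """Pass positive values through and set negative values to zero."""
    return max(0.0, x)


def dense(inputs, weights, biases, activation):
    """One fully connected layer: each row of `weights` feeds one node."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


# Arbitrary, untrained weights (illustrative only).
hidden_w = [[0.5, -0.2, 0.1], [-0.3, 0.8, 0.4]]   # 3 inputs -> 2 hidden nodes
hidden_b = [0.0, 0.1]
output_w = [[1.0, -1.0]]                          # 2 hidden nodes -> 1 output
output_b = [0.0]


def forward(inputs):
    """Input layer -> hidden layer -> output layer."""
    hidden = dense(inputs, hidden_w, hidden_b, relu)
    return dense(hidden, output_w, output_b, sigmoid)[0]


risk = forward([1.0, 0.5, -1.0])   # a value between 0 and 1
```

Training would consist of adjusting the weight values to reduce prediction error on known cases. Adding further hidden layers gives a "deep" network, at the cost of making the learned weights harder to interpret.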

AI will increasingly help to identify the best therapeutic interventions for each specific situation. There have been many negative randomised controlled trials (RCTs) in critical care medicine, showing no impact of the intervention tested on outcomes. These negative results are largely explained by two factors. The first is the choice of mortality as an end-point. Mortality would initially appear to be a ‘strong’ end-point, but is actually affected by many factors other than the studied intervention, so that the effect of the tested intervention is ‘buried’ among the effects of many other factors. The second is the heterogeneity of the patient populations, meaning that although some patients may benefit from the intervention, others may be harmed; however, it may be difficult to differentiate these two patient populations. One example of this dilemma is the administration of blood transfusions to the critically ill. In some patients, the likely benefit is clear, whereas in others unwanted adverse effects may outweigh any possible (limited) benefits. RCTs on transfusions have provided little help in determining optimal transfusion guidelines because randomisation has largely been based on a haemoglobin threshold, typically 7 versus 9 g/dL. However, the decision to transfuse should be based not only on the haemoglobin level but also on other elements, such as the presence of associated respiratory distress, coronary artery disease, frailty, and others. Analysis of big data could help better identify those patients who will benefit from a transfusion. The same question may apply to the administration of albumin, as again RCTs have been based on albumin concentrations, whereas other factors, such as risk of further complications, the presence of sepsis, the presence of liver disease, the magnitude of existing oedema, and so on, should also be taken into account.

Other therapeutic applications for AI that may be valuable include determining the optimal arterial pressure level that should be targeted in individual patients, as well as the right timing of certain therapeutic interventions, such as vasopressin or corticosteroid administration in septic shock.

AI is now being used to inform clinical decision support systems, based on the big data provided from large numbers of electronic health records (EHRs), which offer information on patient demographics, laboratory and microbiology tests, imaging results, physical examination, progress notes, consultant reports, therapeutic interventions, and so on. An important limitation to the use of such information at present is the lack of common terminology in narrative texts, such as doctors’ notes. For example, some patients may be labelled as ‘septic’ by some physicians but simply as ‘infected’ by others. Similarly, the mode of mechanical ventilation does not always follow an internationally recognised vocabulary. Natural language processing methods can be used to interpret narrative text and speech and to extract the information in a format appropriate for machine learning.
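The terminology problem can be illustrated with a deliberately naive sketch that maps varying free-text wording onto a small set of shared concept labels. The synonym table, the concept names, and the example notes are all hypothetical, and grouping ‘septic’ with ‘infected’ under one label is an assumption made for this example rather than a clinical recommendation. Real natural language processing systems go well beyond keyword matching; this version does not even handle negation (“no sign of infection”).

```python
import re

# Hypothetical synonym table: concept label -> phrases seen in notes.
CONCEPTS = {
    "infection_documented": ["septic", "sepsis", "infected", "infection"],
    "pressure_support_ventilation": ["psv", "pressure support"],
}


def extract_concepts(note):
    """Return the sorted concept labels whose phrases appear in the note."""
    text = note.lower()
    found = set()
    for concept, phrases in CONCEPTS.items():
        for phrase in phrases:
            # Whole-word match so that "psv" does not match inside other words.
            if re.search(r"\b" + re.escape(phrase) + r"\b", text):
                found.add(concept)
                break
    return sorted(found)


# Made-up example notes written by different (imaginary) clinicians.
notes = [
    "Patient appears septic, started on PSV.",
    "Likely infected wound; remains on pressure support.",
    "No acute events overnight.",
]
features = [extract_concepts(note) for note in notes]
```

The first two notes use different words but yield the same structured labels, which is the form a downstream machine learning model can consume.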

Predictive modelling AI techniques enable the recognition of patterns that are associated with an increased risk of clinical deterioration or development of complications such as sepsis. Importantly, deterioration outcomes should not be restricted to survival or death but include other indications of morbidity such as organ dysfunction (which may or may not eventually result in death in the absence of intervention). These techniques can be used on the regular floor to rapidly identify patients who may need special attention, additional tests, and perhaps admission to the intensive care unit (ICU).

Patients on the regular floor, as in the ICU, are often monitored using several different systems, and the different variables can be integrated to create more accurate predictions. It is also important to evaluate trends and avoid the dichotomous separations of the past, in which a single alarm would go off when, for example, the heart rate rose above 110/min or the systolic blood pressure fell below 100 mmHg. Alarms based on single variables may also be limited by artefacts: the most common example of this is the SpO2 signal being altered or lost through displacement of the probe. Real respiratory deterioration should be recognised not only by a fall in SpO2 (which never occurs alone), but also by a concurrent increase in heart rate and respiratory rate. Interconnectivity between monitoring systems is still limited today but, increasingly, smart monitors and intelligent systems will combine the different variables and various types of information and be able to continuously update the models as new data become available. The calibration of the models is of paramount importance: we do not want the systems to be too sensitive (with false alarms resulting in so-called ‘alarm fatigue’), but at the same time, we do not want to miss deterioration that should be identified and could be treated if noticed soon enough. The systems tested in hospitals so far have been met with mixed degrees of enthusiasm. Obviously, there is a long road ahead before they can be reliably applied worldwide.
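A minimal sketch of the multi-variable, trend-based logic described above is given below. It raises an alert only when a fall in SpO2 is accompanied by rising heart rate and respiratory rate over the same recent window. The function name, the window length, and the three change thresholds are hypothetical values picked for the example; they are not validated alarm limits.

```python
def respiratory_alert(spo2, heart_rate, resp_rate, window=3,
                      spo2_drop=3, hr_rise=10, rr_rise=4):
    """Alert only if SpO2 falls AND heart rate AND respiratory rate rise.

    Each argument is a list of consecutive readings, oldest first.
    All thresholds are illustrative assumptions, not clinical values.
    """
    if min(len(spo2), len(heart_rate), len(resp_rate)) < window + 1:
        return False   # not enough history to assess a trend

    def change(series):
        # Difference between the latest reading and the one `window` samples ago.
        return series[-1] - series[-1 - window]

    return (
        change(spo2) <= -spo2_drop
        and change(heart_rate) >= hr_rise
        and change(resp_rate) >= rr_rise
    )


# Made-up readings. Probe displacement: SpO2 collapses, other signs unchanged.
artefact = respiratory_alert(
    spo2=[97, 97, 96, 80], heart_rate=[82, 83, 82, 83], resp_rate=[15, 16, 15, 16]
)

# Made-up readings. Concurrent changes in all three variables.
deterioration = respiratory_alert(
    spo2=[97, 96, 94, 92], heart_rate=[85, 90, 96, 104], resp_rate=[16, 18, 20, 24]
)
```

Requiring agreement between variables suppresses the single-signal artefact, but the choice of thresholds directly trades false alarms against missed events, which is the calibration problem raised above.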

At present, AI systems should not replace the bedside healthcare staff, but they are already helping to support appropriate and early decision-making for diagnosis and treatment. AI is ideally placed to evaluate the multiple possible combinations of patients, diagnoses, and therapies to develop intelligent, individualised patient management plans. In March 2023, a round table conference of experts will be held in Brussels, which will discuss one important aspect of this process, i.e., the identification and recognition of specific patient phenotypes within the loose, heterogeneous entities such as sepsis and acute respiratory distress syndrome (ARDS) on which we currently base diagnosis and treatment. With the help of AI, the future of intensive care is rapidly moving toward a personalised medicine approach, which will ultimately improve patient outcomes.